AI-Powered Sky Replacement Tool Coming to Adobe Photoshop

Sky replacements have long been standard practice in architectural photography. However, the existing tools for doing so remain, in my opinion, somewhat haphazard. Some skies are an easy slam-dunk, capable of being replaced in a few clicks using something as simple as the Magic Wand or Quick Selection tool. Others require multiple layers and passes of channel masking or other manual processes that, however effective in the end, can be time-consuming, tedious, and highly technical. Developers have attempted to serve this need with actions and plugins, bringing both free and paid solutions to the table, with unfortunately mixed results. If you’ve been in this genre for a while, you probably know them all. PPA Sky Swap actions, Landscape Pro software, and more generalized tools such as those designed for luminosity masking have all thrown their hats into the ring. Some of these tools produce impressive results under the right conditions, but in my opinion, all fall short in one way or another.

AI (Artificial Intelligence) has quickly entered the lexicon of photography post-production. I recently tried a promising AI-based app, Luminar 4 by Skylum Software. For the most part, it did live up to its hype in the sky replacement department; however, the idea of trying to integrate it into my existing Photoshop workflow (especially since I would have to commit all changes before returning the image to Photoshop) seemed to negate the convenience of having a more automated solution. Luminar is potentially quite powerful, but it does not replace the functionality I need from Photoshop. Skylum also recently announced that it was moving to a subscription-based model for future versions of Luminar, which seems quixotic since a one-time purchase was previously one of its most attractive attributes for users thinking of jumping ship from Adobe’s Creative Cloud subscription. Once Adobe releases the Sky Replacement tool, it may retain many users who were otherwise considering switching to Luminar.

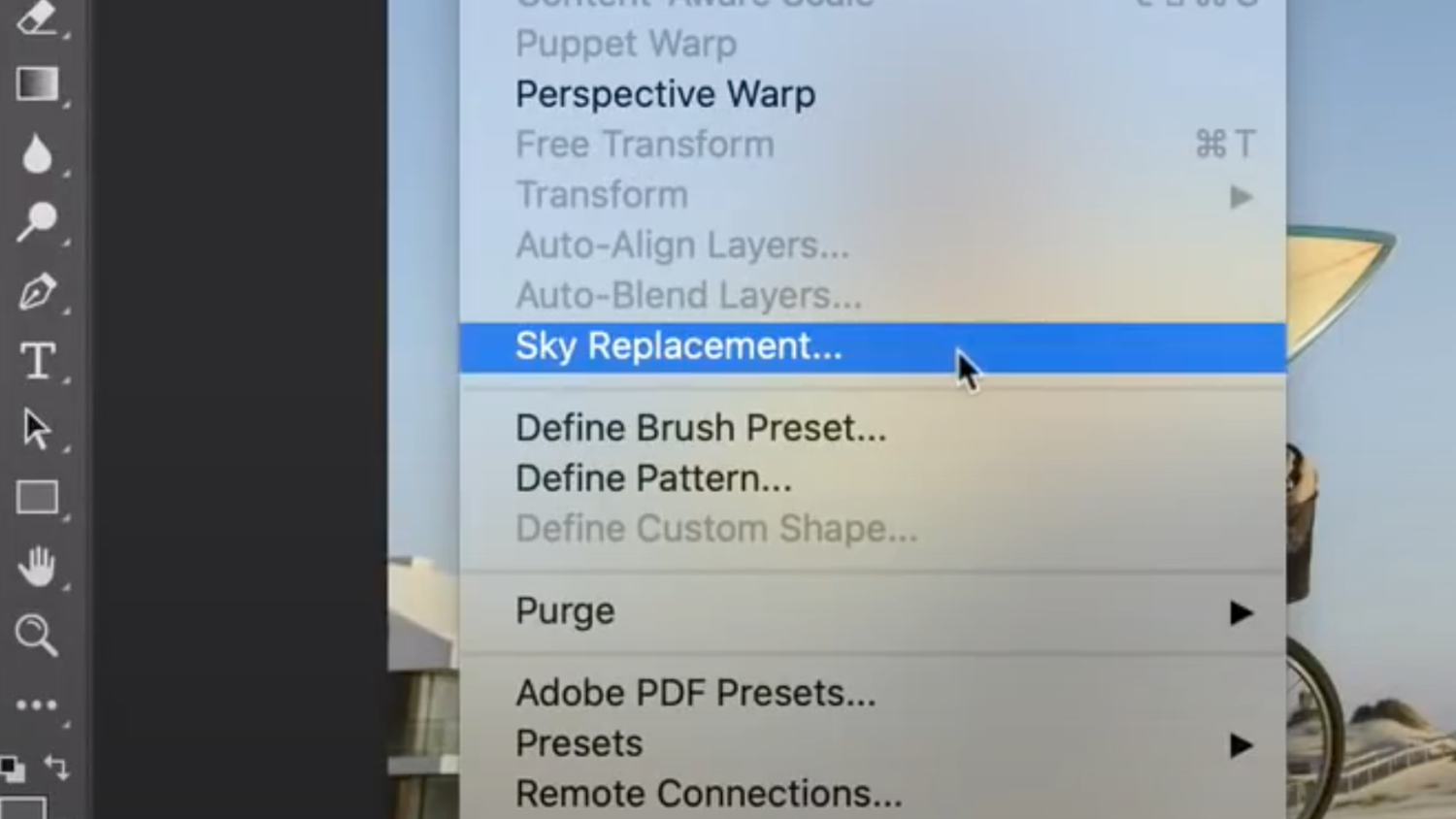

Adobe’s forthcoming Sky Replacement tool is powered by Adobe Sensei, Adobe’s AI engine. The tool comes with its own configurable menu for previewing sky options and adding assets from your own collection. Built-in sliders allow for refining adjustments before committing the results. My favorite part of this new feature is the ability to automatically recolor the rest of the scene in order to more organically coordinate with the chosen sky. The color characteristics can even change just by revealing different parts of the same sky.

As exciting as this tool may be, there are some concerns. In general, I noticed that most of the example images in the demo video had crisp, clearly defined edges, with plenty of contrast between the sky and foreground elements. Under these conditions, any existing tool should be able to create a relatively believable sky replacement, even if it lacks the ability to adjust foreground colors to match. Even so, I did notice some apparent haloing on at least one of the examples (around 1:26), though the edges themselves seemed crisp. This may have been user error since I noticed the “fade edge” slider was set to the maximum amount. There do not appear to be any tools designed for the user to “assist” the detection of sky edges, such as something similar to the Refine Edge tool in Select and Mask, prompting the question of how the masks can be adjusted (other than manually) whenever the AI engine inevitably produces an incorrect result. Around 1:38, there are what appear to be distant mountains on the left side of the image which are removed as the sky is moved around. It looks like these mountains were actually part of the sky image the user selected, but it does raise the question of how the tool would treat distant features like these when they belong to the original photo.

For all the questions raised by the demo video, the workflow is at least non-destructive, adding new layers and masks in a well-organized group once the user’s selections are confirmed. Even so, one apparent behavior leaves me wanting further enhancement: many of the user’s selections are fully committed in the newly created layers, including the sky, its position within the frame, and the masks themselves. The color adjustments are on adjustment layers, so they can be edited, but apparently only in a much more manual way, without being able to re-invoke the AI engine to assist with the settings if the user later swaps the sky for a different option. I’d agree the process is “non-destructive,” but for me, a workflow more similar to Smart Filters would be preferable.

I’m not yet convinced that this new tool will completely replace the existing, more technical tools we regularly work with for sky replacements. However, Adobe has made remarkable improvements over time to features it previously released, so I am confident that it will, at least eventually, be a formidable tool when stacked up against other options. What do you think about this upcoming feature?