Artist Refik Anadol’s Immersive Installations Blend Architecture, Photography, and Machine Intelligence

Earlier this year, during Melbourne Design Week, Turkish-born, LA-based visual artist Refik Anadol gave a talk about his experiences merging art, science and technology to explore architecture through machine-made moving images. At the core of this work is machine intelligence, a topic I briefly explored during my undergraduate studies and have been greatly interested in ever since. Having built my career in technology and now in architectural photography, I am fascinated by the way Anadol’s work accentuates and elevates architecture through the combination of technology and art.

Anadol graduated with an arts degree from Bilgi University in Istanbul, specialising in media arts (with an interest in architectural photography). One of his first attempts at projecting moving images, Augmented Structures v1.0, was part of the 2011 Istanbul Biennial. The project set out to find a method for translating media into architecture by fusing sound, visual arts and architecture. He drew inspiration from Poème Électronique, a collaboration between French composer Edgard Varèse and Swiss architect Le Corbusier that was shown at the Philips Pavilion in 1958. Working from field recordings of Istiklal Street, a popular shopping strip, Anadol and his collaborators converted sound into a mathematical representation, the mathematics into architecture, and the architecture into a living canvas.

To deepen his understanding of this type of visual representation, he moved to LA and enrolled at UCLA to work on his second Master of Fine Arts, where he was mentored by the likes of Casey Reas, Jennifer Steinkamp and Christian Moeller. For his Master’s dissertation, he wanted to incorporate an iconic Los Angeles landmark into his project. As a photographer with a strong interest in architecture, he was inspired by the works of Frank Gehry, notably Gehry’s Walt Disney Concert Hall.

Ever since discovering the collaboration between Varèse and Le Corbusier, Anadol has been captivated by one of Varèse’s compositions, Amériques. For his project, Visions Of America: Amériques, Anadol and his team developed an algorithm that analysed the music in real time as it was played and used the interior architecture of the concert hall as a canvas on which to project the music as animated graphics. The real-time analysis of the music was intertwined with an analysis of the conductor’s movements, which was also projected onto the walls of the concert hall. The result was an immersive experience that went beyond listening to the composition: the audience could see the ephemeral interaction between the conductor and Varèse’s music.

In 2016, he took up an artist’s residency with Google’s Artists and Machine Intelligence Group to learn about AI, which proved to be a pivotal moment in his career. The residency resulted in Archive Dreaming, his first installation to use machine intelligence, which was later followed by Melting Memories.

In 2018, Anadol was commissioned by the Los Angeles Philharmonic to create a light show celebrating its 100th anniversary. For this project, WDCH Dreams, Anadol worked with Google’s Artists and Machine Intelligence Group, drawing on their expertise in machine intelligence to sift through the LA Philharmonic’s 45TB of archival material: 587,763 image files, 1,880 video files, 1,483 metadata files, and 17,773 audio files (the equivalent of 40,000 hours of audio from 16,471 performances).

Anadol and his team employed numerous machine learning algorithms to identify patterns in the memories (the archival material) and create the dreams (the resulting output of the algorithms). Although generated by a machine, these dreams were designed to mimic how humans dream, thus giving the building a consciousness. They were projected onto the facade of the concert hall by 42 projectors installed around the perimeter of the complex; in a sense, using photography to adorn architecture.

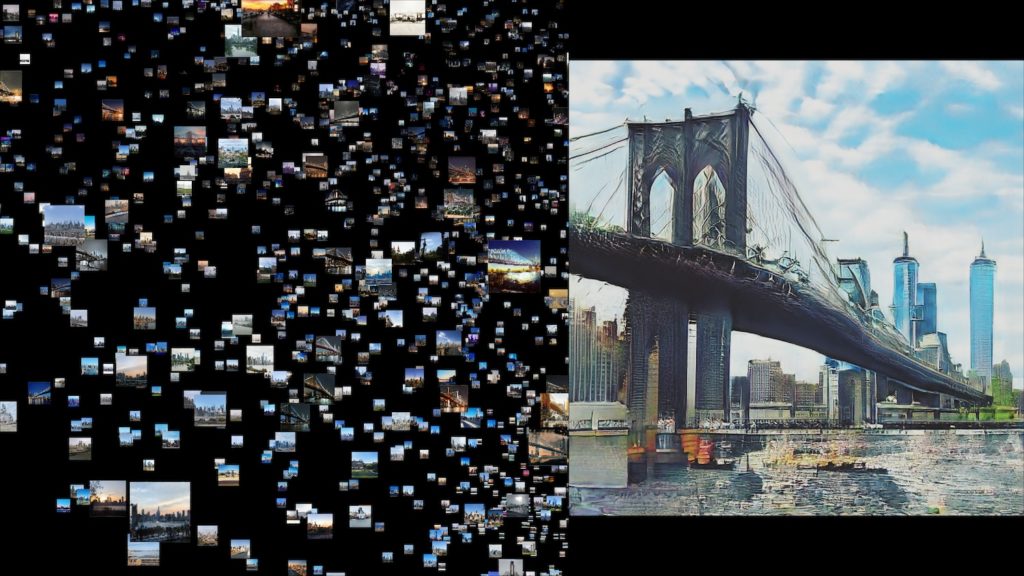

Anadol’s latest body of work, Machine Hallucination, is currently on exhibition in NYC. This work explores the architecture of New York, based on past and present memories. Anadol defines memories here as images taken on phones that exist online.

Anadol and his team began by exploring over 100 million publicly available photographs of New York, gathered by a custom algorithm that trawled through the public domain. These images were then filtered to remove any people so that the emphasis could be placed on the architecture itself, resulting in a final set of eight million images.

The soundtrack to this exhibit was generated by a recurrent neural network trained on publicly available recordings of the city’s sounds. The audio and imagery are then fed into a final algorithm, a generative adversarial network, which uses the two data inputs to output a 30-minute cinematic experience whose narrative is entirely generated by a machine. Anadol describes this final output as the machine’s own dream of New York, drawing on past and present references to imagine what the city may look like in the near future. All of this is experienced in a true three-dimensional space, where the dreams are projected onto the walls, ceilings and floors, engulfing you inside the mind of a machine.

If you happen to be in New York and are interested in visiting Anadol’s current exhibition, the details can be found here.